If greater, it is attached to the end of the sorted sub-array.

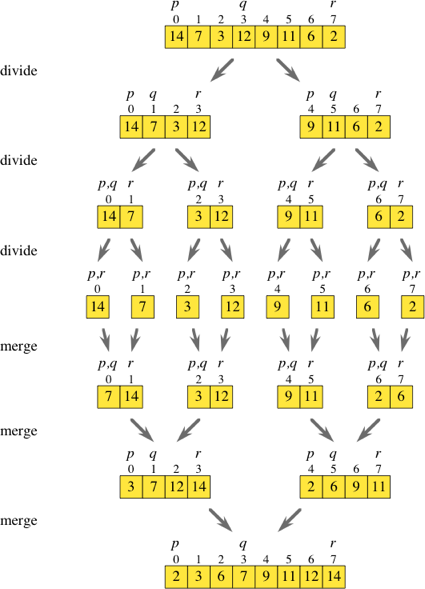

The first element of the unsorted array (i.e., the key element) is compared with the largest (i.e., last) element of the sorted sub-array. The algorithm works by growing/shrinking the sorted/unsorted sub-arrays. Initially, the sorted sub-array consists of only the first element of the original array. Elements in the unsorted sub-array need to find their appropriate place in the sorted one and be inserted there (hence the name insertion sort). At each step, the array consists of two sub-arrays: a sorted one and an unsorted one. Insertion sort: It is an in-place comparison-based sorting algorithm. Continue merging until you are left with only one array. Its worst-case time complexity is O(n log n), which is the same as Quicksort's best case, and the best case takes about half as many iterations as the worst case. Next, compare the lists of two values, and merge them into a list of four values placing all in sorted order. Merge every two adjacent elements into a sorted array of size two. Merge sort: Given an unsorted array, recursively divide it into two halves (without changing the order of elements) until no further division can be made (i.e., until you reach individual elements). It is proven that the average time complexity is also O(nlog(n)). The time complexity of Quicksort is between O(n2) (i.e., worst case, which occurs when the pivot is always the smallest or the largest element) and O(nlog(n)) (i.e., the best case, which occurs when the pivot is always the middle element). Recursively call Quicksort on the two sub-arrays until you reach the base case: sub-array of only two elements or an empty sub-array. Partition the array by finding the elements that are smaller/larger than the pivot and putting them in sub-arrays left/right. First, pick an element from the array and call it the pivot. Quicksort: It is an example of D and C algorithm. This algorithm is very inefficient because it has a time complexity of O(n2). Continue until the original list is empty. Selection sort: given a list, scan it, find the smallest item, put that item as the first element of a new and sorted list and remove it from the original list.

An ancient divide-and-conquer algorithm is the Euclidean algorithm that can be used to find the Greatest Common Divisor (GCD) of two integersĪs the name suggests, these are algorithms that take a sequence of numbers and sort them. One of the main advantages of these algorithms is that they are easy to parallelize. It is like divide to get to the base and conquer by solving that base. During recursion, each call adds to the stack's top until the base case is reached (see for more examples).Ī Divide-and-conquer (D and C) algorithm is an algorithm that breaks down a problem into two or more sub-problems using recursion until these sub-problems reach the base of the recursion. It then continues the execution from where the call was made. When the function ends, Python looks at the top of the stack, either restoring the parameters and local variable's values or removing information from the top.

That function can (potentially) make additional calls adding further information to the stack's top.

Then it makes the function call and switches execution to the start of the call function. These include the value of parameters, local variables, the location where the function call is made. Each time a Python code makes a function call, Python puts some information on the call stack. Understanding recursion requires one to understand Python's call stack mechanism. For a nice discussion comparing recursion and iteration, see this Stack Overflow post Still, the beauty of recursion is that it makes the implementation clear. However, this conversion sometimes may be tough, and it may hurt the performance of the program. At every repetition, the size of the inputs reduces, and the program moves towards the base case.Įvery iterative loop (such as for or while loops) can be replaced with a recursive function. In the base case, results are computed immediately, while in the recursive steps, they are computed by making one or multiple calls to the same function. Every recursive function consists of a base case and recursive steps. In Computer Science, a recursive function is one that calls itself repeatedly. Recursion is the process of self-similar repetition. Recursion and Divide and Conquer (D and C)

0 Comments

Leave a Reply. |

Details

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed